You're Wasting Your Context Window

Most people paste nothing into their AI conversations. Power users paste everything. Here's the difference it makes.

Claude has a 200K-token context window. GPT-4o has 128K. Most people use about 50 tokens of that: just their question.

That's like having a 1TB hard drive and storing one text file.
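To put a number on it, here is a quick back-of-the-envelope sketch using the figures above. The 50-token question is the rough estimate from this post, not a measurement, and the `utilization` helper is a name made up for illustration.

```python
# Context window sizes as quoted in this article (in tokens).
CONTEXT_WINDOWS = {"Claude": 200_000, "GPT-4o": 128_000}

# Rough size of a bare question, per the estimate above (an assumption).
QUESTION_TOKENS = 50


def utilization(tokens_used: int, window: int) -> float:
    """Return the fraction of the context window that is occupied."""
    return tokens_used / window


for model, window in CONTEXT_WINDOWS.items():
    share = utilization(QUESTION_TOKENS, window)
    # The "%" format spec multiplies by 100 and appends a percent sign.
    print(f"{model}: {share:.3%} of the window used")
```

Either way, a bare question occupies well under a tenth of one percent of the window.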

The power user difference

Watch someone who's productive with AI tools. Before they ask a question, they paste context:

- Their project's tech stack and architecture

- The specific file or function they're working on

- What they've already tried

- How they want the response formatted

The AI's response quality jumps dramatically because it's not guessing — it knows the situation.
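If you want to do this by hand, the four items above map naturally onto labeled sections of a single prompt. The following is a minimal sketch, assuming markdown headings as the section markers; `build_prompt` and its parameter names are hypothetical, and the sample values are placeholders rather than a real project.

```python
def build_prompt(question: str, stack: str = "", code: str = "",
                 tried: str = "", response_format: str = "") -> str:
    """Assemble context sections and the question into one prompt string."""
    sections = [
        ("Tech stack and architecture", stack),
        ("Code I'm working on", code),
        ("What I've already tried", tried),
        ("Response format", response_format),
        ("Question", question),
    ]
    # Skip sections that were left empty so the prompt stays compact.
    parts = [f"## {title}\n{body.strip()}"
             for title, body in sections if body.strip()]
    return "\n\n".join(parts)


# Placeholder values for illustration only.
prompt = build_prompt(
    question="Why does this function return None?",
    stack="Python 3.12 command-line tool, no framework",
    code="def load(path):\n    open(path).read()",
    tried="Confirmed that the file exists and is not empty",
)
print(prompt)
```

The question goes last so the model reads the situation before it reads the request. That ordering is a design choice in this sketch, not a requirement.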

The problem: assembling context is tedious

Copy-pasting context from multiple sources for every conversation is a chore. You need your project README, the relevant code file, your coding preferences, and maybe a previous AI response about the same topic.

By the time you've assembled all that, you've lost your train of thought.

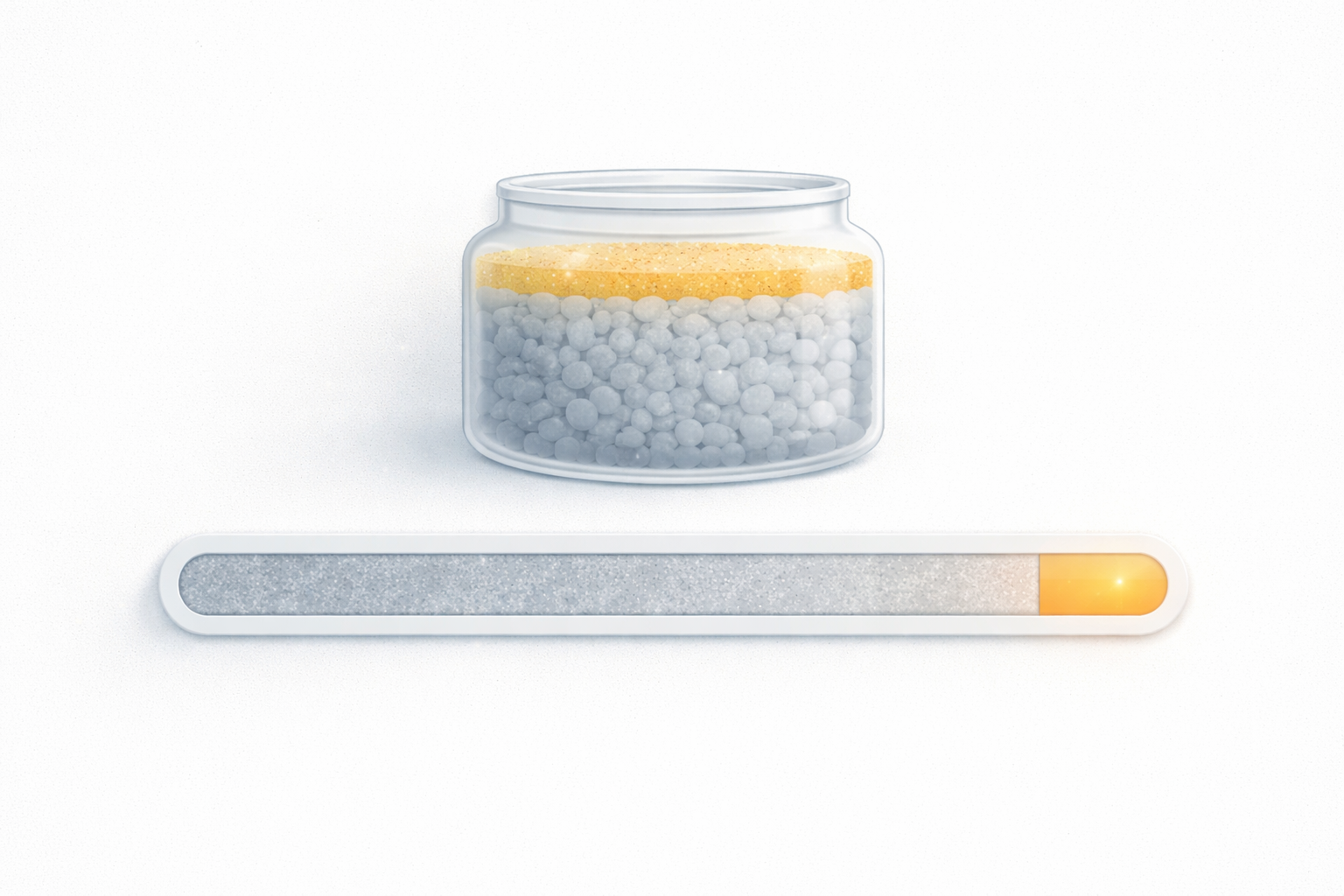

Context Packs: one-click context injection

This is why we built Context Packs in Helium. A Context Pack bundles related cards, conversations, and your personal context into a single formatted block. One tap copies everything to your clipboard in a format that suits the model you're pasting into: markdown, XML tags, JSON, or plain text.

A Context Pack for "iOS Build Issues" might include:

- Your 3 cards about Xcode archive problems

- The conversation where you solved the provisioning profile issue

- Your My Context, with the details of your Apple Developer setup

One copy-paste, and the AI has everything it needs.
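To make the idea of one bundle and several output formats concrete, here is an illustrative sketch. It is not Helium's implementation or its actual output format: `PackItem`, `render_pack`, the `kind` labels, and the XML tag names are all assumptions invented for this example, and the item bodies are placeholders.

```python
import json
from dataclasses import asdict, dataclass
from xml.sax.saxutils import escape, quoteattr


@dataclass
class PackItem:
    kind: str   # hypothetical labels: "card", "conversation", "my_context"
    title: str
    body: str


def render_pack(name: str, items: list[PackItem], fmt: str = "markdown") -> str:
    """Render a bundle of items as markdown, XML tags, JSON, or plain text."""
    if fmt == "markdown":
        parts = [f"# {name}"]
        parts += [f"## {i.title} ({i.kind})\n{i.body}" for i in items]
        return "\n\n".join(parts)
    if fmt == "xml":
        # quoteattr/escape keep characters like & and < from breaking the tags.
        rows = "\n".join(
            f"  <item kind={quoteattr(i.kind)} title={quoteattr(i.title)}>"
            f"{escape(i.body)}</item>"
            for i in items
        )
        return f"<context_pack name={quoteattr(name)}>\n{rows}\n</context_pack>"
    if fmt == "json":
        payload = {"pack": name, "items": [asdict(i) for i in items]}
        return json.dumps(payload, indent=2)
    if fmt == "plain":
        lines = [name] + [f"{i.title} [{i.kind}]: {i.body}" for i in items]
        return "\n".join(lines)
    raise ValueError(f"unsupported format: {fmt}")


# Placeholder content standing in for real cards and conversations.
pack = [
    PackItem("card", "Xcode archive problems", "Placeholder card text"),
    PackItem("conversation", "Provisioning profile fix", "Placeholder summary"),
    PackItem("my_context", "Apple Developer setup", "Placeholder setup notes"),
]
print(render_pack("iOS Build Issues", pack, fmt="xml"))
```

Keeping the items separate from the rendering step is what lets the same pack be pasted as XML tags into one tool and as markdown into another.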

Your context window is your superpower

The developers getting the most out of AI aren't writing better prompts; they're providing better context. The context window is one of the most underused features of any LLM.

Fill it.